The Apple iPhone XS and the Dawn of Computational Photography

After a couple weeks with the new device, it’s the camera that’s most impressive

Whenever Apple introduces a new product, the hype is that it’s “revolutionary.” That’s quite literally hyperbole, because very few companies debut something that truly creates a sea change with any sort of consistency. But the new iPhone XS and XS Max actually are revolutionary, especially because they herald a kind of pocket photography that has never been possible before.

To understand how and why, remember that Apple’s keynote mentioned a process that takes “pre-photos” before you press the shutter, and then a series of photos while you’re actually snapping the shot. You might mistake that process for one that merely produces an HDR image, which is what the iPhone X could already do. But what’s happening now is new.

Normal HDR simply stacks a series of images of the same scene, captured at different exposures, and balances shadows and highlights so that, say, the sky isn’t blown out, but the details in the flower that’s your main subject aren’t too dark, either.
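For the curious, here is a minimal sketch of that stacking idea in Python. It is a generic exposure-fusion illustration, not Apple’s pipeline: the `well_exposedness` weighting, the `sigma` value, and the three tiny two-pixel “frames” are all our own assumptions, chosen only to show how a blend can favor whichever exposure rendered each pixel best.

```python
import math

def well_exposedness(value, sigma=0.2):
    """Weight a pixel (0.0 = black, 1.0 = white) by how close it is to mid-gray.

    The Gaussian shape and sigma are illustrative assumptions.
    """
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))

def fuse_exposures(frames):
    """Blend same-sized grayscale frames pixel by pixel.

    Each output pixel is a weighted average of that pixel across all frames,
    so well-exposed values count for more than crushed or blown-out ones.
    """
    fused = []
    for pixels in zip(*frames):
        weights = [well_exposedness(p) for p in pixels]
        total = sum(weights)  # always > 0 because exp() is never zero
        fused.append(sum(w * p for w, p in zip(weights, pixels)) / total)
    return fused

# Hypothetical bracketed frames of a two-pixel scene: [flower, sky]
under = [0.05, 0.55]   # flower too dark, sky well exposed
normal = [0.30, 0.95]  # flower dim, sky nearly blown out
over = [0.60, 1.00]    # flower well exposed, sky blown out

fused = fuse_exposures([under, normal, over])
```

In the fused result the flower lands near the overexposed frame’s value and the sky near the underexposed frame’s, which is the “balance” described above. Real HDR also has to align frames and handle color, which this sketch ignores.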

But Apple’s approach isn’t merely balancing light and dark. It’s computational analysis of the subject’s content. Is there a face? How is it lit? What about the evenness of skin tone? What about the colors in the rest of the scene? Are they consistent? And with two lenses instead of one, especially in Apple’s Portrait Mode, the wide-angle lens can be used to study the background while the slightly telephoto lens can be used to focus on details, like the subject’s face.

Now, this technology already existed with Portrait Mode on the prior 8 Plus and X, but what those phones didn’t have is Apple’s new, bigger sensor. Yes, the pixel count is still merely 12MP, but the pixels are larger, so they can do more work with less light, and that means Apple was able to take more shots every time you hit the shutter. More shots, more information, and more data crunching, all done by a new and far more powerful chip, the A12 Bionic, which can churn through a brain-melting five trillion operations per second, whereas the X’s A11 could only sweat out 600 billion, roughly an eighth as many.

Translation: In the time it takes you to press and release the shutter button, the new A12 Bionic has mapped the depth of your subject’s visage, compared light and shadow, checked skin tones against its massive database, and assessed the lifelike accuracy of the green hues in the foliage in the background just outside the window. This all happens with the front-facing camera, too.

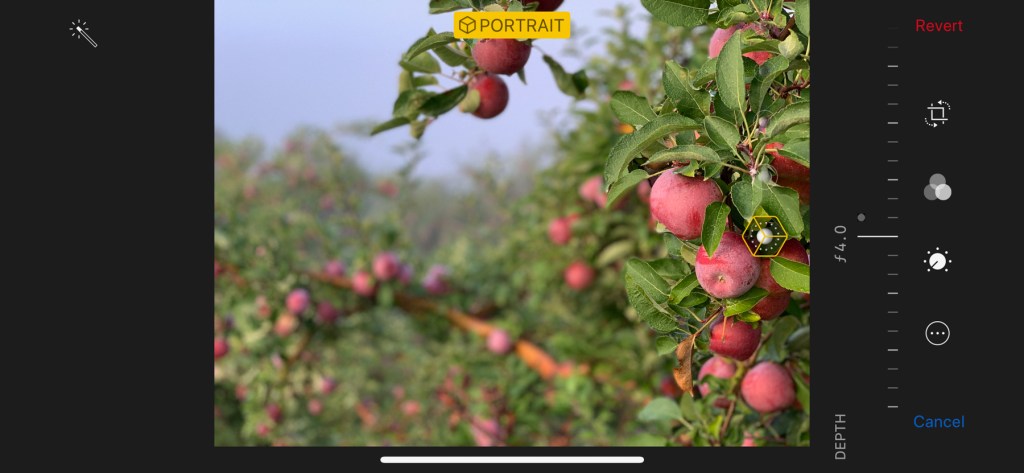

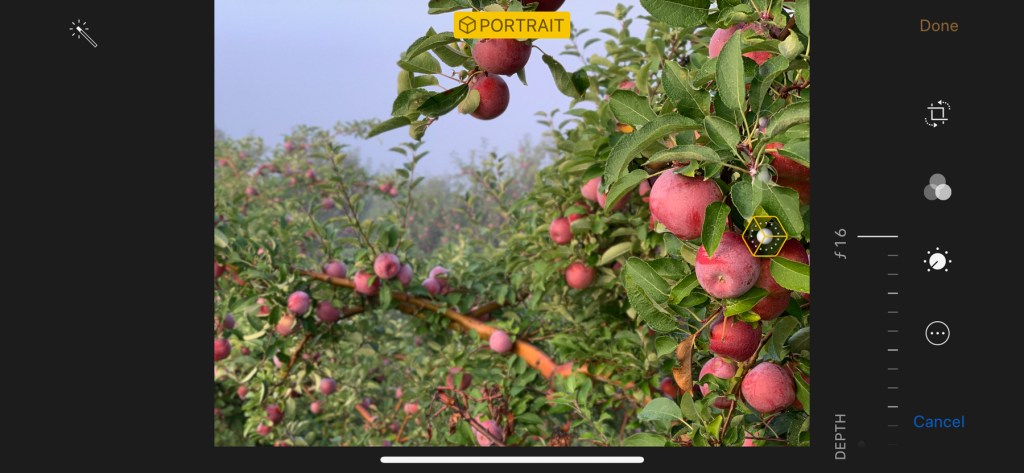

The new chip is partially why Portrait Mode works far more seamlessly. Where before this mode would struggle with autofocus and getting a fix on foreground and background, it’s much more consistent now. The new post-edit feature is a joy, too, because you can change modes (for instance, switching to stage lighting to pull your subject forward) and change the depth of field after the fact, to blur the background exactly as much or as little as you please.
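To give a feel for how an after-the-fact depth-of-field control could work once a depth map exists, here is a rough Python sketch. This is our own illustration, not Apple’s method: the function `blur_radius`, the `scale` constant, and the `max_radius` cap are hypothetical. The only borrowed idea is the standard lens approximation that defocus blur grows with the difference in inverse distance from the focal plane and shrinks as the f-number rises.

```python
def blur_radius(depth_m, focus_m, f_number, scale=40.0, max_radius=12.0):
    """Hypothetical blur radius, in pixels, for a point depth_m meters away.

    focus_m is the distance to the subject in focus; f_number is the
    simulated aperture chosen on the slider. scale and max_radius are
    arbitrary tuning constants for this sketch.
    """
    # Points on the focal plane get zero defocus
    defocus = abs(1.0 / depth_m - 1.0 / focus_m)
    # A smaller f-number (wider simulated aperture) means more blur
    radius = scale * defocus / f_number
    return min(radius, max_radius)

# Subject at 1 m, background at 4 m, at two simulated apertures
subject_blur = blur_radius(1.0, 1.0, 1.4)
wide_open = blur_radius(4.0, 1.0, 1.4)
stopped_down = blur_radius(4.0, 1.0, 16.0)
```

The subject stays sharp at any setting, while the background goes from heavily blurred at the wide simulated aperture to nearly sharp when stopped down. Because the depth map is stored with the photo, that radius can be recomputed whenever you move the slider.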

Apple says they studied the effect of 50mm DSLR portrait lens bokeh (that soft focus behind a subject you get at shallow depth of field) to dial in the right feel. They also apparently heard some complaints from photo nerds that subjects could look cropped forward in the image, almost like a cardboard cut-out against the backdrop, a problem that has been solved almost entirely.

One complaint (explained in great depth over at Halide) is that fusing multiple images together and massaging them heavily with algorithms leaves Apple’s default look too soft, especially in selfies, while also bringing too much light into shadows and over-eliminating harshness.

We get it, and we’d hardly quibble with that argument. Apple will say that the new sensor’s pixels are not only broader, for greater light sensitivity, but deeper as well, to render color accurately across a broader spectrum. But Halide also points out that the new camera wants to default to faster shutter speeds and higher ISO, and that means Apple has to knock all that contrast and noise down with one trillion operations. (And don’t forget that Halide’s solution, one we don’t think is necessarily wrong, is to sell you an app that lets you take RAW images and edit the noise and whatever else you want to revise after the fact.)

Then again, when we shot a time-lapse sequence using Apple’s own app, it actually pulled more light into shaded walkers beneath a construction canopy on New York’s High Line, without blowing out the sky to the north. We took the same sequence using Nikon’s new Z7 mirrorless, which balanced the sky but left the walkers in shadow. Sure, we could fix that in post with software, but the point is that if you want to share a cool time-lapse from your vacation on Instagram pronto, it’s not only more convenient to shoot with your phone; in some instances it produces something superior.

Meanwhile, not everything Apple’s done can miraculously repair what is, after all, a few tiny drill-hole-sized lenses and a sensor smaller than the tip of an infant’s pinky toe. Our night shots look okay on the amazingly bright new XS screen, but all the edges are actually very soft and painterly when you eyeball the work on a larger desktop monitor. Hey, it’s a quarter-second exposure at the very limits of the f/1.8 lens, snapped without using a tripod, so it’s hardly shabby, but a $600 base-level DSLR on a tripod would make something more usable, because some physics still apply to photography.

One final note, not about photography but about connectivity, which is an almost overlooked attribute of a device that, yeah, was originally supposed to be about making phone calls and sending texts. Happily, we’ve experienced far fewer dead zones in cell reception on the new XS, thanks to a redesigned antenna enclosure. Now, what we can’t wait to try is Apple’s new Dual SIM set-up, which debuts later this fall with an update and should be a boon for lowering roaming charges when we’re on the road.

COOL HUNTING always gets permission to use the images we publish; however, as an independent publication, we cannot afford to continue fighting unfair claims of copyright infringement, so the images have been removed from this post.